In cloud migration, also known as “move to cloud,” you move existing data processing tasks to a public cloud platform, such as Amazon Web Services (AWS), Microsoft Azure, or Google Cloud Platform (GCP); to a private cloud; or to a hybrid cloud solution.

See our blog post, What is Cloud Migration, for an introduction.

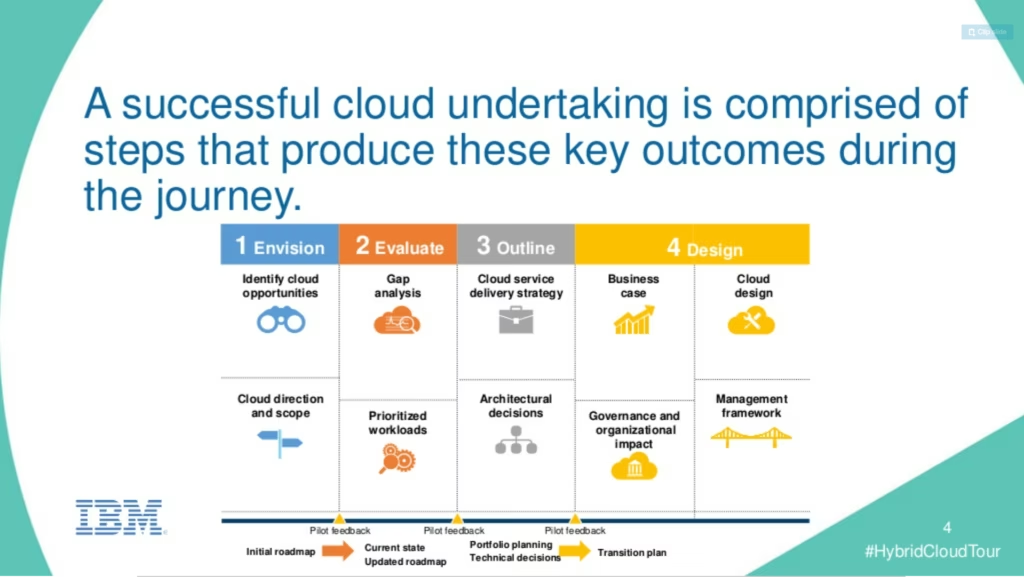

Figure 1: Steps in cloud migration. (Courtesy IBM, via Slideshare.)

Cloud migration usually includes most or all of the following steps:

- Identifying on-premises workloads to move to cloud

- Baselining performance and resource use before moving

- (Potentially) optimizing the workloads before the move

- Matching services/components to equivalents on different cloud platforms

- Estimating the cost and performance of the software, post-move

- Estimating the cost and schedule for the move

- Tracking and ensuring success of the migration

- Optimizing the workloads in the cloud

- Managing the workloads in the cloud

- Rinse and repeat

Some of the steps are interdependent; for instance, it helps to know the cost of moving various workloads when choosing which ones to move first. Both experience with cloud migration and supporting tools can help you get the best results.

The bigger the scale of the workloads you are considering moving to the cloud, the more likely you will be to want outside consulting help, useful tools, or both. With that in mind, it might be worthwhile to move a smaller workload or two first, so you can gain experience and decide how best to proceed for the larger share of the work.

We briefly describe each of the steps below, to help create a shared understanding for all the participants in a cloud migration project, and for executive decision-makers. We’ve illustrated the steps with screenshots from Unravel Data, which includes support for cloud migration as a major feature. The steps, however, are independent of any specific tool.

Note: You may decide, at any step, not to move a given workload to the cloud, or not to undertake cloud migration at all. Keep track of your results as you complete each step, and evaluate whether the move is still a good idea.

The Relative Advantages of On-Premises and Cloud

Before starting a cloud migration project, it’s valuable to have a shared understanding of the relative advantages of on-premises installations vs. cloud platforms.

On-premises workloads tend to be centrally managed by a large and dedicated staff, with many years of expertise in all the various technologies and business challenges involved. Allocations of servers, software licenses, and operations people are budgeted in advance. Many organizations keep some or all of their workloads on-premises due to security concerns about the cloud, but these concerns may not always be well-founded.

On-premises hosting has high capital costs; long waits for new hardware, software updates, and configuration changes; and difficulty finding skilled people, including people who can support older technologies.

Cloud workloads, by contrast, are often managed in a more ad hoc fashion by small project teams. Servers and software licenses can be acquired instantly, though it still takes time to find skilled people to run them. (This is a major factor in the rise of DevOps and DataOps, as developers and operations people learn each other’s skills to help get things done.)

The biggest advantage of working in the cloud is flexibility, including reduced need for in-house staff. The biggest disadvantage is the flip side of that same flexibility: surprises as to costs. Costs can go up sharply and suddenly. It’s all too easy for a single complex query to cost many thousands of dollars to run, or for hundreds of thousands of dollars in costs to appear unexpectedly. Also, the skilled people needed to develop, deploy, maintain, and optimize cloud solutions are in short supply.

When it comes to building up an organization’s ability to use the cloud, fortune favors the bold. Organizations that develop a reputation as strong cloud shops are more able to attract the talent needed to get benefits from the cloud. Organizations that fall behind have trouble catching up.

Even bold organizations, however, often keep a substantial on-premises footprint. Security, contractual requirements with customers, lower costs for some workloads (especially stable, predictable ones), and high costs for migrating specific workloads to the cloud are among the reasons for keeping some workloads on-premises.

The Ten Steps To A Successful Cloud Migration

1. Identifying On-Premises Workloads to Move to Cloud

Typically, a large organization will run a wide variety of workloads, some on-premises, and some in the cloud. These workloads are likely to include:

- Third-party software and open source software hosted on company-owned servers; examples include Hadoop, Kafka, and Spark installations

- Software created in-house hosted on company-owned servers

- SaaS packages hosted on the SaaS provider’s servers

- Open source software, third-party software, and in-house software running on public cloud, private cloud, or hybrid cloud servers

The cloud migration motion is to examine software running on company-owned servers and either replace it with a SaaS offering, or move it to a public, private, or hybrid cloud platform.
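As a minimal sketch, the inventory pass described above can be expressed as a filter over a list of workloads: skip anything already running as SaaS or in the cloud, and tag each on-premises workload with one of the two paths. The `Workload` fields, hosting labels, and workload names are hypothetical; a real inventory would come from your own asset or cluster-management records.

```python
from dataclasses import dataclass


@dataclass
class Workload:
    name: str
    hosting: str          # hypothetical labels: "on_prem", "saas", or "cloud"
    saas_available: bool  # assumption: a suitable SaaS replacement exists


def migration_candidates(inventory):
    """Return (workload name, suggested path) for each on-premises workload."""
    plan = []
    for w in inventory:
        if w.hosting != "on_prem":
            continue  # already SaaS or already in the cloud
        path = "replace with SaaS" if w.saas_available else "move to public/private/hybrid cloud"
        plan.append((w.name, path))
    return plan


# Hypothetical inventory for illustration only.
inventory = [
    Workload("spark-etl", "on_prem", False),
    Workload("crm", "saas", True),
    Workload("ticketing", "on_prem", True),
    Workload("reporting", "cloud", False),
]
for name, path in migration_candidates(inventory):
    print(f"{name}: {path}")
```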

2. Baselining Performance and Resource Use

It’s vital to analyze the performance and resource use of each on-premises workload that is being considered for a move to the cloud. To move the workload successfully, you need to identify and cost similar resources in the cloud. And the new, cloud-based version must meet or exceed the performance of the on-premises original, while running at lower cost (or while gaining flexibility and other benefits that are deemed worth any cost increase).

Baselining performance and resource use for each workload may need to include:

- Identifying the CPU and memory usage of the workload

- Identifying all elements in the software stack that supports the workload, on-premises

- Identifying dependencies, such as data tables used by a workload

- Identifying the most closely matching software elements available on each cloud platform that’s under consideration

- Identifying custom code that will need to be ported to the cloud

- Specifying work needed to adapt code to different supporting software available in the cloud (often older versions of the software that’s in use on-premises)

- Specifying work needed to adapt custom code to similar code platforms (for instance, modifying HiveQL queries to a SQL dialect available in the cloud)

This work is easier if a target cloud platform has already been chosen, but you may need to compare estimates for your workloads on several cloud platforms before choosing.
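The CPU and memory part of a baseline can be summarized from periodic resource samples. Here is a minimal sketch, assuming samples are dicts with hypothetical keys `cpu_cores` and `mem_gb` collected over a representative period. It reports the mean, a high percentile, and the peak, because sizing cloud instances to the average alone tends to under-provision for spikes. The nearest-rank percentile used here is one of several common percentile definitions.

```python
import math
from statistics import mean


def percentile(values, p):
    """Nearest-rank percentile (p in 0..100] of a non-empty list."""
    ordered = sorted(values)
    rank = math.ceil(p * len(ordered) / 100)
    return ordered[max(rank, 1) - 1]


def baseline(samples):
    """Summarize per-interval resource samples for one workload."""
    cpu = [s["cpu_cores"] for s in samples]
    mem = [s["mem_gb"] for s in samples]
    return {
        "cpu_mean": mean(cpu),
        "cpu_p95": percentile(cpu, 95),
        "cpu_peak": max(cpu),
        "mem_mean": mean(mem),
        "mem_p95": percentile(mem, 95),
        "mem_peak": max(mem),
    }


# Hypothetical samples: steady load with one spike.
cpu_samples = [4] * 9 + [20]
mem_samples = [16] * 9 + [64]
samples = [{"cpu_cores": c, "mem_gb": m} for c, m in zip(cpu_samples, mem_samples)]
print(baseline(samples))
```

In this example, sizing to the mean (5.6 cores) would badly under-provision for the spike to 20 cores.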

3. (Potentially) Optimizing the Workloads, Pre-Move

GIGO – Garbage In, Garbage Out – is one of the older acronyms in computing. If a workload is a mess on-premises, and you move it, largely unchanged, to the cloud, then it will be a mess in the cloud as well.

If a workload runs poorly on-premises, that may be acceptable, given that the hardware and software licenses needed to run the workload are already paid for; the staff needed for operations are already hired and working. But in the cloud, you pay for resource use directly. Unoptimized workloads can become very expensive indeed.

Unfortunately, common practice is to skip optimization and then perform a rough, rapid lift and shift to meet externally imposed deadlines. This may be followed by shock and awe over the bills that the workload then generates in the cloud. If you take your time and optimize before making the move, you’ll avoid trouble later.
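Simple arithmetic shows why inefficiency matters more in the cloud. Under pay-per-use pricing, compute cost scales with node-hours, so a job that uses twice the nodes for twice as long costs about four times as much. On-premises, the same inefficiency mostly consumes capacity that is already paid for. This sketch assumes a single flat, hypothetical hourly rate; real bills also include storage, networking, service charges, and any discounts.

```python
def monthly_compute_cost(nodes, hours_per_run, runs_per_month, rate_per_node_hour):
    """Compute cost under simple pay-per-use pricing."""
    return nodes * hours_per_run * runs_per_month * rate_per_node_hour


RATE = 0.50  # hypothetical USD per node-hour; not a real provider price

# Hypothetical daily job, before and after optimization.
unoptimized = monthly_compute_cost(nodes=40, hours_per_run=6, runs_per_month=30, rate_per_node_hour=RATE)
optimized = monthly_compute_cost(nodes=20, hours_per_run=3, runs_per_month=30, rate_per_node_hour=RATE)
print(f"unoptimized: ${unoptimized:,.2f}/month, optimized: ${optimized:,.2f}/month")
```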

4. Matching Services to Equivalents in the Cloud

Many organizations will have decided on a specific platform for some or all of their move to cloud efforts, and will not need to go through the selection process again for the cloud platform. In other cases, you’ll have flexibility, and will need to look at more than one cloud platform.

For each target cloud platform, you’ll need to choose the target cloud services you wish to use. The major cloud services providers offer a plethora of choices, usually closely matched to on-premises offerings. You are also likely to find third-party offerings that offer some combination of on-premises and multi-cloud functionality, such as Databricks and Snowflake on AWS and Azure.

The cloud platform and cloud services you choose will also dictate the amount of code revision you will have to do. One crucial detail: software versions in the cloud may be behind, or ahead of, the versions you are using on-premises, and this can necessitate considerable rework.

There are products out there to help automate some of this work, and specialized consultancies that can do it for you. Still, the cost and hassle involved may prevent you from moving some workloads – in the near term, or at all.
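A small mapping table plus a version check can make the matching step, and the version-drift risk, explicit. In this sketch, the mapping covers only two components as an illustration (Spark and Hive can run on AWS EMR, Azure HDInsight, and Google Dataproc); check each provider’s current documentation before relying on any mapping. The version numbers are hypothetical, not the versions any service currently offers.

```python
from typing import Dict, List, Tuple

# Illustrative mapping of on-premises components to managed services that can run them.
SERVICE_MAP: Dict[str, Dict[str, str]] = {
    "spark": {"aws": "AWS EMR", "azure": "Azure HDInsight", "gcp": "Google Dataproc"},
    "hive": {"aws": "AWS EMR", "azure": "Azure HDInsight", "gcp": "Google Dataproc"},
}


def parse_version(version: str) -> Tuple[int, ...]:
    return tuple(int(part) for part in version.split("."))


def compare_versions(on_prem: str, cloud: str) -> str:
    """Report whether the cloud version is behind, ahead of, or equal to on-prem."""
    a, b = parse_version(on_prem), parse_version(cloud)
    if a == b:
        return "same version"
    direction = "ahead of" if b > a else "behind"
    scope = "major" if a[0] != b[0] else "minor/patch"
    return f"cloud is {direction} on-prem ({scope} difference)"


def plan(components: Dict[str, Tuple[str, str]], platform: str) -> List[str]:
    """components maps name -> (on-prem version, version offered in the cloud)."""
    lines = []
    for name, (on_prem_v, cloud_v) in components.items():
        service = SERVICE_MAP.get(name, {}).get(platform, "no mapping; needs research")
        lines.append(f"{name} -> {service}: {compare_versions(on_prem_v, cloud_v)}")
    return lines


# Hypothetical versions for illustration only.
for line in plan({"spark": ("2.4.8", "3.3.0"), "hive": ("3.1.3", "3.1.3")}, "aws"):
    print(line)
```

A major-version gap, as with the hypothetical Spark entry, is the kind of difference that can necessitate considerable rework.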

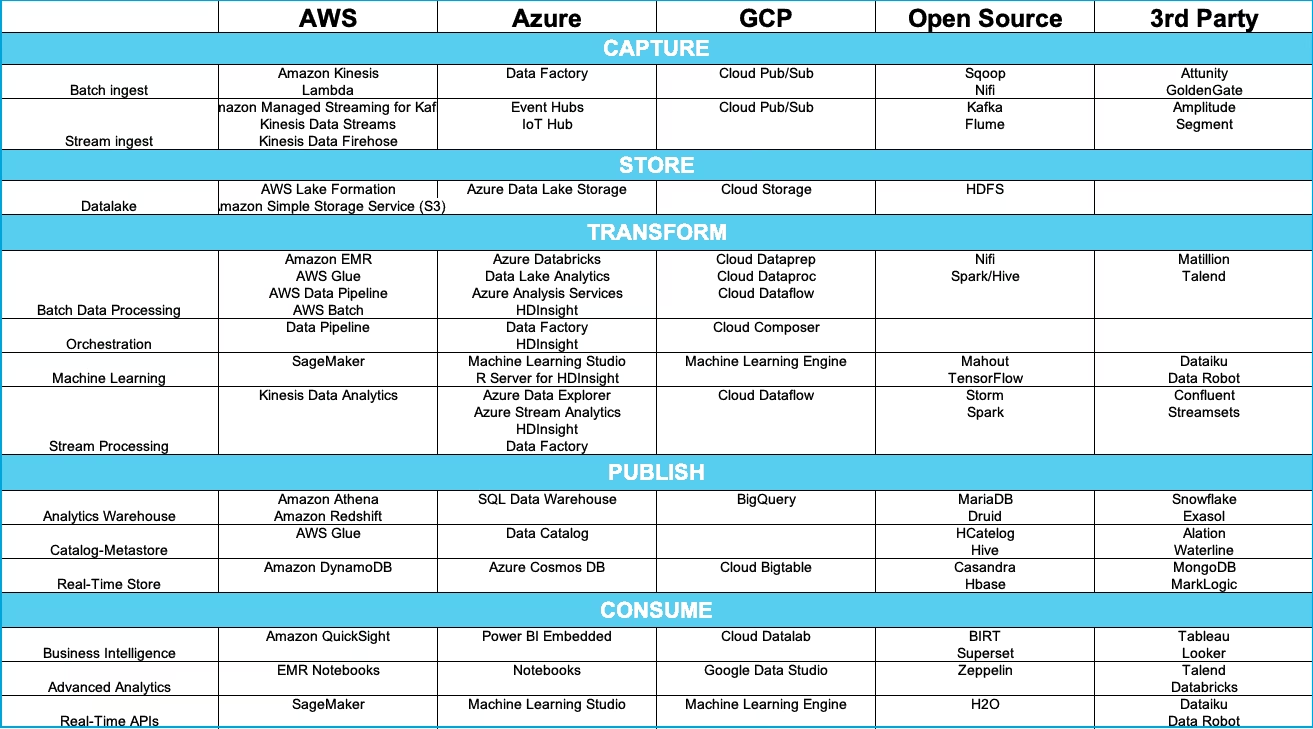

Software available on major cloud platforms is shown in Figure 2, from our blog post, Understanding Cloud Data Services (recommended).

Figure 2: Software available on major cloud platforms. (Subject to change)

5. Estimating the Cost and Performance of the Software, Post-Move

Now you can estimate the cost and performance of the software, once you’ve moved it to your chosen cloud platform and services. You need to estimate the instance sizes you’ll need and CPU costs, memory costs, and storage costs, as well as networking costs and costs for the services you use. Estimating all of this can be very difficult, especially if you haven’t done much cloud migration work in the past.
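The cost categories above can be combined into a first-pass monthly estimate. This is a minimal sketch; every rate in the example is a hypothetical placeholder, not an actual provider price, and real pricing varies by region, instance family, commitment discounts, and service. The 730 hours figure is the average number of hours in a month (8,760 / 12).

```python
from dataclasses import dataclass


@dataclass
class CostInputs:
    instance_count: int
    instance_hourly_rate: float     # covers CPU and memory for the chosen instance size
    hours_per_month: float
    storage_gb: float
    storage_rate_per_gb_month: float
    egress_gb: float                # data transferred out of the cloud per month
    egress_rate_per_gb: float
    managed_service_fees: float     # other service charges, as a monthly total


def monthly_estimate(c: CostInputs) -> dict:
    compute = c.instance_count * c.instance_hourly_rate * c.hours_per_month
    storage = c.storage_gb * c.storage_rate_per_gb_month
    network = c.egress_gb * c.egress_rate_per_gb
    total = compute + storage + network + c.managed_service_fees
    return {"compute": compute, "storage": storage, "network": network,
            "services": c.managed_service_fees, "total": total}


# All figures below are hypothetical.
inputs = CostInputs(instance_count=10, instance_hourly_rate=0.25, hours_per_month=730,
                    storage_gb=20_000, storage_rate_per_gb_month=0.02,
                    egress_gb=1_000, egress_rate_per_gb=0.09,
                    managed_service_fees=500.0)
print(monthly_estimate(inputs))
```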

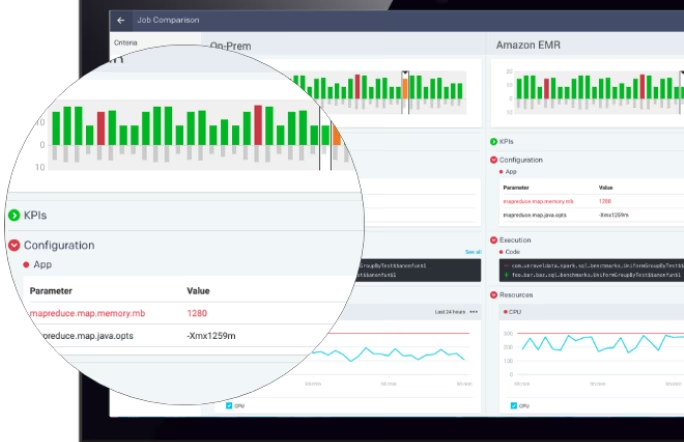

Unravel Data is a valuable tool here. It provides detailed estimates that are likely to be far easier to get, and more accurate, than those you could come up with on your own through estimation, experimentation, or both. You install Unravel Data on your on-premises stack, then use it to generate comparisons across all cloud choices.

An example of a comparison between an on-premises installation and a targeted move to AWS EMR is shown in Figure 3.

Figure 3: On-premises to AWS EMR move comparison

6. Estimating the Cost and Schedule for the Move

After the above analysis, you need to schedule the move, and estimate the cost of the move itself:

- People time. How many people-hours will the move require? Which people, and what won’t get done while they’re working on the move?

- Calendar time. How many weeks or months will it take to complete all the necessary tasks, once dependencies are taken into account?

- Management time. How much management time and attention, including the time to complete the schedule and cost estimates, is being consumed by the move?

- Direct costs. What are the costs in person-hours? Are there costs for software acquisition, experimentation on the cloud platform, and lost opportunities while the work is being done?

Each of these figures is difficult to estimate, and each depends to some degree on the others. Do this as best you can, to make a solid business decision as to whether to proceed. (See the next step.)
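Calendar time is set by dependencies: tasks that can run in parallel overlap, so the schedule is driven by the longest chain of dependent tasks (the critical path), not by the sum of all durations. This sketch computes the earliest finish for each task with Python’s `graphlib` (3.9+), assuming unlimited parallel staff, and totals person-hours for a direct labor estimate. Task names, durations, hours, and the hourly rate are all hypothetical.

```python
from graphlib import TopologicalSorter


def schedule(tasks):
    """tasks: name -> (duration_weeks, person_hours, [prerequisite names]).

    Returns (calendar weeks, total person-hours, earliest finish per task).
    """
    graph = {name: set(deps) for name, (_, _, deps) in tasks.items()}
    finish = {}
    for name in TopologicalSorter(graph).static_order():
        weeks, _, deps = tasks[name]
        start = max((finish[d] for d in deps), default=0)
        finish[name] = start + weeks
    total_weeks = max(finish.values())
    total_person_hours = sum(hours for _, hours, _ in tasks.values())
    return total_weeks, total_person_hours, finish


# Hypothetical migration plan for a single workload.
tasks = {
    "baseline": (2, 80, []),
    "port_code": (6, 480, ["baseline"]),
    "provision": (1, 40, ["baseline"]),
    "migrate_data": (3, 120, ["provision"]),
    "validate": (2, 160, ["port_code", "migrate_data"]),
}
HOURLY_RATE = 100  # hypothetical loaded cost per person-hour, USD

weeks, hours, finish = schedule(tasks)
print(f"{weeks} weeks, {hours} person-hours, ${hours * HOURLY_RATE:,} direct labor")
```

Here the durations sum to 14 weeks, but the critical path (baseline, code porting, validation) takes 10. Real schedules are also constrained by who is available, which this sketch ignores.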

7. Tracking and Ensuring Success of the Migration

We will spend very little digital “ink” here on the crux of the process: actually moving the workloads to the chosen cloud platform. It’s a lot of work, with many steps and sub-steps of its own.

However, all of those steps are highly contingent on the specific workloads you’re moving, and on the decisions you’ve made up to this point. (For instance, you may try a quick and dirty “lift and shift,” or you may take the time to optimize workloads first, on-premises, before making the move.)

So we will simply point out that this step is difficult and time-consuming. It’s also very easy to underestimate the time, cost, and heartburn attendant to this part of the process. As mentioned above, doing a small project first may help reduce problems later.

8. Optimizing the Workloads in the Cloud

This step is easy to ignore, but it may be the most important step of all in making the move a success. You don’t have to optimize workloads on-premises before moving them. But you must optimize the workloads in the cloud after the move. Optimization will improve performance, reduce costs, and help you significantly in achieving better results going forward.

Only after optimizing the workload in the cloud can you calculate an ROI for the project. The ROI you obtain may signal that moving to the cloud is a vital priority for your organization, or signal that the ancient mapmaker’s warning for mariners, Here Be Dragons, should be heeded as you move workloads to the cloud.

Figure 4: Ancient map with sea monsters. (Source: Atlas Obscura.)
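Once post-optimization cloud costs are known, a basic ROI and payback calculation can be sketched as follows. This is a simple, undiscounted model over a fixed horizon, and all dollar figures are hypothetical; a fuller analysis might discount future cash flows and put a value on non-cost benefits such as flexibility.

```python
def migration_roi(on_prem_annual, cloud_annual, migration_cost, years):
    """Undiscounted ROI over a fixed horizon.

    cloud_annual should reflect costs measured after optimizing in the cloud.
    """
    savings = (on_prem_annual - cloud_annual) * years
    return (savings - migration_cost) / migration_cost


def payback_months(on_prem_annual, cloud_annual, migration_cost):
    """Months of savings needed to recover the migration cost, or None if never."""
    monthly_savings = (on_prem_annual - cloud_annual) / 12
    if monthly_savings <= 0:
        return None  # the move never pays back on running costs alone
    return migration_cost / monthly_savings


# Hypothetical figures, USD.
print(migration_roi(1_200_000, 900_000, 400_000, years=3))
print(payback_months(1_200_000, 900_000, 400_000))
```

A negative ROI, or a payback of `None`, is exactly the kind of signal that argues for keeping a workload on-premises.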

9. Managing the Workloads in the Cloud

You have to actively manage workloads in the cloud, or you’re likely to experience unexpected shocks as to cost. Actually, you’re going to get shocked; but if you manage actively, you’ll keep those shocks to a few days’ worth of bills. If you manage passively, your shocks will equate to one or several months’ worth of bills, which can lead you to question the entire basis of your move to cloud strategy.

Actively managing your workloads in the cloud can also help you identify further optimizations – both for operations in the cloud, and for each of the steps above. And those optimizations are crucial to calculating your ROI for this effort, and to successfully making future cloud migration decisions, one workload at a time.
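One way to keep cost shocks to a few days’ worth of bills is to compare each day’s spend with a trailing average and alert on sharp jumps. This is a minimal sketch; the 7-day window and 1.5x threshold are arbitrary assumptions, and the daily totals would in practice come from your provider’s billing export. The major cloud providers also offer built-in budget and alerting features.

```python
from statistics import mean


def spend_alerts(daily_spend, window=7, threshold=1.5):
    """Return indices of days whose spend exceeds threshold x the trailing mean.

    daily_spend: daily cost totals, oldest first.
    """
    alerts = []
    for i in range(window, len(daily_spend)):
        trailing = mean(daily_spend[i - window:i])
        if daily_spend[i] > threshold * trailing:
            alerts.append(i)
    return alerts


# Hypothetical daily spend in USD, with a two-day spike.
spend = [100, 105, 98, 102, 99, 101, 100, 240, 250, 110]
print(spend_alerts(spend))
```

Note that a spike raises the trailing average for the following days; a refinement could exclude already-flagged days from the baseline.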

10. Rinse and Repeat

Once you’ve made your move to cloud decision pay off for one workload, or a small group of workloads, you’ll want to repeat the process. Or, possibly, you’ve learned that moving to the cloud is not a good idea for many of your existing and planned workloads.

Just as one example, if you currently have a lot of your workloads on a legacy database vendor, you may find many barriers to moving workloads to the cloud. You may need to refactor, or even re-imagine, much of your data processing infrastructure before you can consider cloud migration for such workloads.

That’s the value of going through a process like this for a single workload, or a small set of workloads, first: once you’ve done this, you will know much of what you previously didn’t know. And you will be rested and ready for the next steps in your move to cloud journey.

Unravel Data as a Force Multiplier for Move to Cloud

Unravel Data is designed to serve as a force multiplier in each step of a move to cloud journey. This is especially true for big data moves to cloud.

As the name implies, big data processing on-premises may be the most challenging set of operations when it comes to the potential for generating costs and resource allocation issues that are surprising, even shocking, as you pursue cloud migration.

Unravel Data has been engineered to help you solve these problems. Unravel Data can help you easily estimate the costs and challenges of cloud migration for on-premises workloads heading to AWS, to Microsoft Azure, and to Google Cloud Platform (GCP).

Unravel Data is optimized for big data technologies:

- On-premises (source): Includes Cloudera, Hadoop, Impala, Hive, Spark, and Kafka

- Cloud (destination): Similar technologies in the cloud, as well as AWS EMR, AWS Redshift, AWS Glue, AWS Athena, Azure HDInsight, Google Dataproc, and Google BigQuery, plus Databricks and Snowflake, which run on multiple public clouds.

We will be adding many on-premises sources, and many cloud destinations, in the months ahead. Both relational databases (which natively support SQL) and NoSQL databases are included in our roadmap.

Unravel is available in each of the cloud marketplaces for AWS, Azure, and GCP. Among the many resources you can find about cloud migration are a video from AWS for using Unravel to move to Amazon EMR, and a blog post from Microsoft for moving big data workloads to Azure HDInsight with Unravel.

We hope you have enjoyed, and learned from, reading this blog post. If you want to know more, you can get a free health check report for your data environment, or contact us.